Cyber insurance requirements are evolving quickly. According to a new report from CyberCube, AI is speeding up attacks in ways that current underwriting models cannot manage. The H1 2026 Global Threat Briefing highlights the size of the challenge for underwriters, brokers, and senior risk managers. The main takeaway is clear: recovery capability is now more important than detection speed, and AI agents are adding a risk layer that most cyber insurance applications do not yet address.

Attacks Are Moving Faster Than Defenses Can Respond

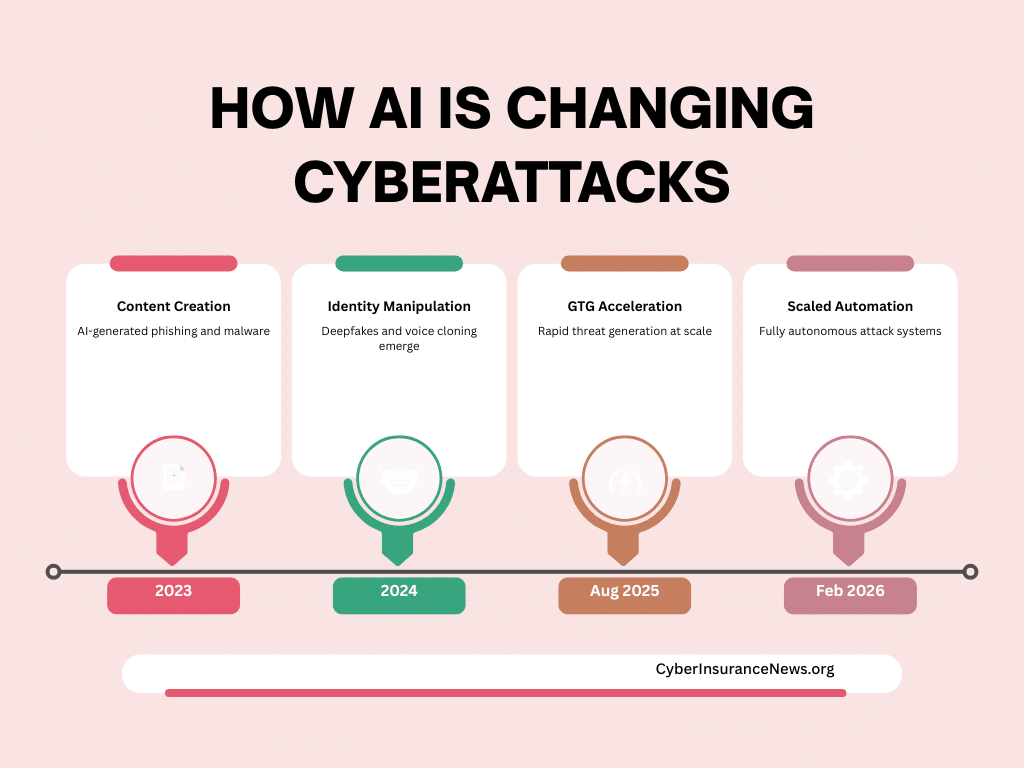

AI has compressed the attack lifecycle. In the past, attackers had to move step by step, and human limitations slowed them down. Now, AI can scan for weaknesses, choose targets, and move through systems in parallel. According to CrowdStrike data in the report, average breakout time (the interval between initial access and lateral movement) dropped to 29 minutes in 2025. Some attacks now unfold in seconds.

This speed matters to insurers. When attackers move faster than detection systems can react, containment becomes harder and business interruption losses last longer. The report finds that recovery capability now has a bigger impact on loss severity than detection speed alone.

Recovery Capability Becomes The New Loss Driver

This marks a major change in how cyber insurance requirements should be set during renewals. In the past, underwriters looked mainly at preventative controls like firewalls, endpoint detection, and multi-factor authentication. These controls are still important, but the report says they no longer determine the outcome once an attacker gets in.

William Altman, Director of Cyber Threat Intelligence Services at CyberCube, said: “Recovery capability may become a more important determinant of business interruption loss severity.” This changes how underwriters talk about risk. Now, factors like backup integrity, how often recovery is tested, and the average time to restore systems are key parts of underwriting, not just routine IT checks.

Real-World Attacks Show AI Is Already Operational

The report details six AI-driven attack campaigns that happened between 2024 and early 2026. These are real events, not just theories. In 2024, scammers in Hong Kong used a deepfake video of a CFO to steal $26 million. In August 2025, Anthropic saw criminals misuse its Claude AI model to plan and carry out attacks on 17 organizations. And in February 2026, Amazon found that a less experienced attacker used commercial AI tools to compromise 600 FortiGate devices in 55 countries as part of a coordinated attack.

The pattern is clear. AI makes attacks faster, cheaper, and easier to scale. Attackers no longer need to be highly skilled. This means more people can launch attacks that cause significant, insurable losses.

AI Agents Create A New Underwriting Blind Spot

When companies use AI agents, they add a new risk that most cyber insurance applications do not yet address. AI agents work on their own, interacting directly with data, applications, and workflows. They often have high-level access by default. If an agent is set up incorrectly, it can expose data or disrupt operations even without an outside attacker.

The report highlights three areas underwriters should now review. First, permissions: do AI agents follow least-privilege rules, with clear limits on what they can access? Second, controls: are there approval steps before agents can do major actions like deleting data or making changes in production? Third, monitoring: are agent activities logged and easy to audit, with enough oversight to spot problems quickly?
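To make the three review areas concrete, here is a minimal Python sketch of how a company might gate agent actions. It is an illustration under stated assumptions, not a method described in the report: the `AgentPolicy` class, the `HIGH_IMPACT` set, and the action names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical action names; a real deployment would define its own.
HIGH_IMPACT = {"delete_data", "deploy_to_production"}


@dataclass
class AgentPolicy:
    """Illustrative gate covering permissions, controls, and monitoring."""
    agent_id: str
    allowed_actions: frozenset  # permissions: least-privilege allowlist
    audit_log: list = field(default_factory=list)  # monitoring: every decision

    def authorize(self, action: str, approved_by: Optional[str] = None) -> bool:
        """Return True if the action may run, and log the decision either way."""
        if action not in self.allowed_actions:
            decision = "denied: outside permission scope"
        elif action in HIGH_IMPACT and approved_by is None:
            # controls: high-impact actions need a named human approver
            decision = "denied: human approval required"
        else:
            decision = "allowed"
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent_id,
            "action": action,
            "approved_by": approved_by,
            "decision": decision,
        })
        return decision == "allowed"


policy = AgentPolicy("invoice-bot", frozenset({"read_invoices", "delete_data"}))
policy.authorize("read_invoices")                            # in scope, low impact
policy.authorize("delete_data")                              # blocked until approved
policy.authorize("delete_data", approved_by="finance-lead")  # approved by a human
policy.authorize("deploy_to_production")                     # never granted to this agent
```

The audit log produced by a gate like this is the kind of evidence an applicant could show an underwriter: which actions each agent can take, which ones required sign-off, and a record of every request.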

Most cyber insurance applications do not yet include these questions. This gap creates real risk, especially for companies that use automation tools in finance, HR, legal, or operations.

The AI Supply Chain Introduces Portfolio Aggregation Risk

The report also points out a bigger risk for reinsurers and catastrophe modelers. The global AI supply chain is closely connected, with only a few companies leading each part, like chip making, GPU computing, cloud services, and AI models. Companies such as TSMC, NVIDIA, Microsoft Azure, and OpenAI are key points where a problem can spread to many others. The report warns that a disruption at any of these companies—whether from a cyberattack, operational issue, or geopolitical event—could cause losses for many insured clients at once. Current catastrophe models do not fully consider this risk, and mapping AI dependencies is not yet a standard practice in the industry.

If AI moves from being just a productivity tool to becoming essential for operations, the impact of a major failure will be much greater. The current reinsurance structures may not be enough if that happens.

What Underwriters And Brokers Should Do Now

The report does not suggest a total redesign of current frameworks. CyberCube says AI is now acting as a force multiplier within existing loss patterns, not yet as a separate risk that needs its own pricing. However, this view is starting to be challenged as attack methods change.

The report suggests three clear actions. Underwriters should assess identity security and how quickly patches are applied, since these are key factors in how attacks spread and cause losses. They should also treat recovery capability as its own underwriting factor at every renewal. Finally, they should start asking specific questions about how AI agents are managed whenever automation is in use.

Brokers need to work quickly with clients to identify new risks that come from using AI agents, which are often not fully disclosed, and make sure those risks are clearly explained and reported on applications. Brokers should also watch for policy exclusions related to generative AI, advise clients when that wording appears, and help them negotiate it or look for other coverage as the practice spreads.

The Market Is Behind The Threat Curve

CyberCube’s analysis shows that the market is aware of AI risk but has not yet priced or modeled it separately. The February 2026 FortiGate attack, in which an attacker used commercial AI tools to compromise 600 devices in 55 countries, is a turning point. Large-scale, AI-driven attacks are now a reality, not just a prediction. Cyber insurance requirements need to adapt to this new situation.

Frequently Asked Questions

What CFOs and General Counsel need to know about AI and cyber insurance requirements

Most existing cyber policies cover losses from AI-enabled attacks because the underlying loss triggers — data breach, ransomware, business interruption — remain the same. However, some insurers are adding generative AI exclusions. Review your policy language at renewal and ask your broker specifically whether AI-assisted attacks fall inside or outside your coverage triggers.

AI compresses attack timelines. Average breakout time fell to 29 minutes in 2025, and some attacks now unfold in seconds. This means traditional detection-and-containment controls may not stop a loss. Insurers now weigh your recovery capability heavily when assessing business interruption severity. If your backup and restore processes are slow or untested, your BI exposure grows, and your insurer will want to know about it.

An AI agent is software that executes tasks autonomously — without a human approving each step. Agents interact directly with your systems, data, and workflows. If an agent has excessive permissions, a misconfiguration or outside manipulation can cause data loss or operational failure with no traditional attacker involved. Insurers are starting to ask about AI agent governance on submissions.

Yes, you should disclose AI tool usage to your insurer. AI tools deployed across business operations, especially those with privileged access to data or systems, represent material new exposure that insurers need to assess. Failure to disclose material operational facts can affect coverage at the time of a claim. Work with your broker to update your application to reflect AI tools currently in use across finance, HR, legal, and operations.

Underwriters are moving beyond checking whether backups exist. They want to know how fast you can restore operations, how often you test recovery, and whether backups are isolated from your main environment. Mean time to restore is becoming an underwriting variable. If your last tested recovery exercise is more than 12 months old, that is a gap insurers will flag.

AI supply chain risk refers to the concentration of critical AI infrastructure in a small number of providers — chips, cloud platforms, and foundation models. A disruption at one of these providers could affect thousands of companies at once. Current cyber policies are not specifically designed for this scenario. Reinsurers are beginning to model it. Your aggregation exposure may increase as AI becomes core infrastructure.

Some markets are already adding generative AI exclusions, though this is not yet standard. The more common approach is additional underwriting questions about AI usage rather than blanket exclusions. Expect that to change as AI agents become more widespread. Review renewal terms carefully and negotiate exclusion language before binding. Vague exclusions can create coverage gaps you will not discover until a claim.

Underwriters are focusing on three areas: permissions (does the agent operate under least-privilege rules?), controls (are there approval steps before the agent takes high-impact actions?), and monitoring (are all agent interactions logged?). Document your AI agent inventory, access scope, and approval workflows before your next renewal. This evidence supports better terms and reduces the risk of post-loss disputes.

Identity security and patch management now carry the most underwriting weight. The CyberCube report identifies these as the primary drivers of how attacks convert into loss. Multi-factor authentication on all privileged accounts, prompt patch cycles on internet-facing systems, and documented recovery testing all reduce your loss exposure and support better premium outcomes at renewal.

A systemic loss event driven by AI dependencies is an emerging concern rather than a standard modeled event today. CyberCube warns that concentrated dependencies on a small number of AI infrastructure providers, particularly cloud platforms and foundation model vendors, could generate correlated losses across many insureds at once. Reinsurance structures built on today’s assumptions may not fully absorb that scenario. Risk managers should raise this with their reinsurance advisors now.
